The Prompt Journal

Jaydev Gusani

Not prompt history.

A retrieval layer for long-context dialogue.

The Mismatch

A session can run 200,000 tokens.

A novel’s worth of reasoning, synthesis, back-and-forth.

Want to return to something from earlier?

On desktop, you open browser find.

A utility built for static pages.

Not live conversations.

On mobile, there is no find.

You scroll,

you scroll,

and hope.

The model remembers everything.

The interface surfaces nothing.

The Cost

This is not a minor inconvenience.

It is retrieval failure at the moment of highest intent.

You were in flow.

You needed something.

The system had it.

You could not get to it.

So you asked again.

The model regenerated an answer it had already given.

You paid twice.

In time.

In tokens.

In attention.

That is not user error.

That is a structural gap.

The Pattern

Every long-form medium has retrieval.

Books have page numbers. Codebases have search.

Documents have headings. Email has threads.

AI conversation may be the only long-form medium without a native memory layer.

Not because it is difficult.

Because nobody built one.

Some attempts have moved in this direction, borrowing outline rails and minimaps from note-taking software.

A meaningful step.

But inherited metaphors. Useful for documents. Not native to conversation.

Project Colossus.

Multi-gigawatt scale.

Paired with a borrowed document minimap.

The metaphor is inherited.

The Proposal

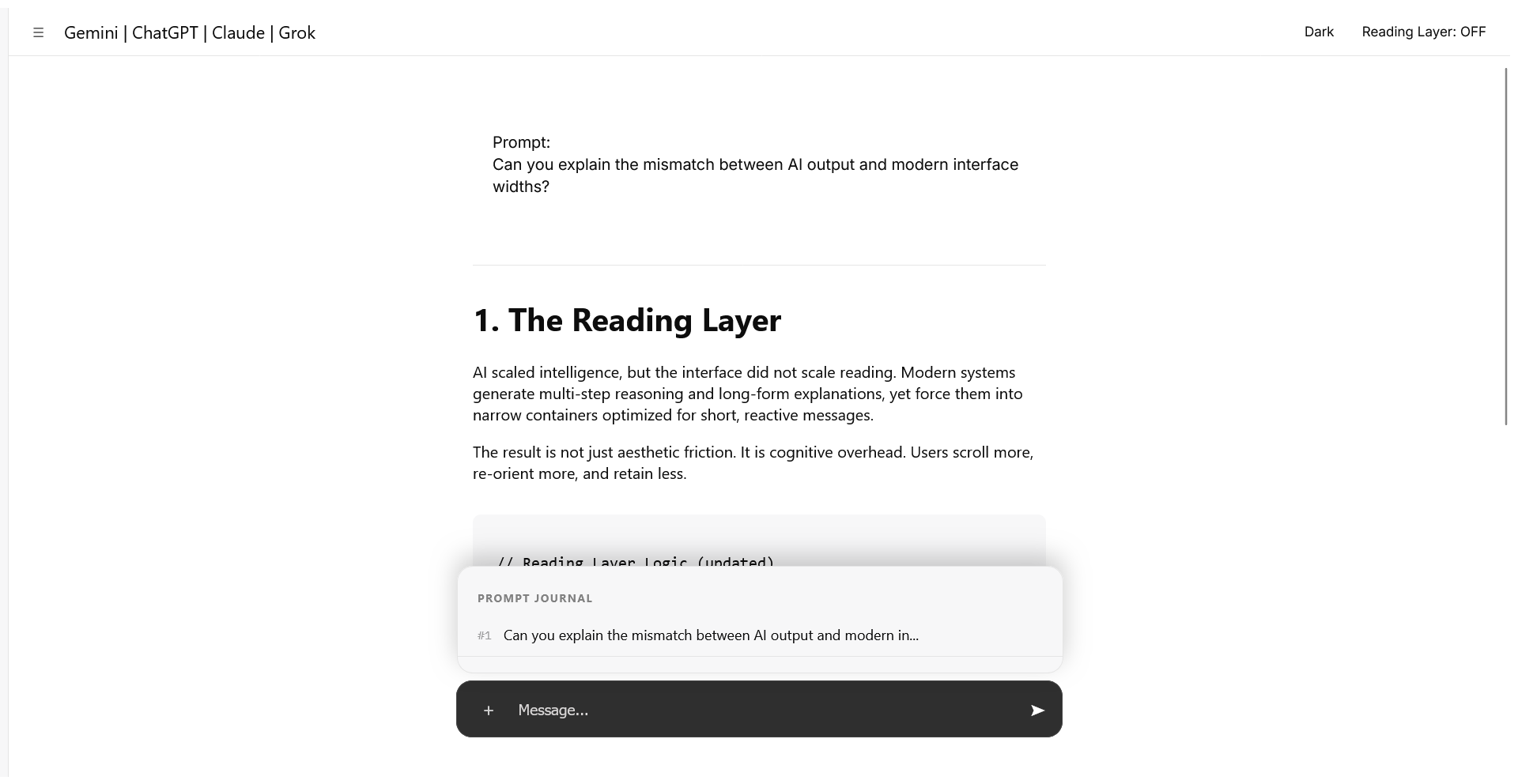

The Prompt Journal.

A drawer rising from the composer.

The one surface every AI interface shares.

The one place user attention already lives.

Prompt number, prompt preview.

Tap.

Jump to that moment in the thread.

Not search.

Not branching trees.

Orientation.

Prompts become anchors.

Conversation gains landmarks.

Scrollable.

Lightweight.

No model changes.

No new pipeline.

One interaction pattern.

Platform agnostic.
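A minimal sketch of the idea, not a definitive implementation. It assumes a thread is a list of messages with hypothetical `role` and `text` fields, and shows how prompt number, prompt preview, and jump target could be derived from the thread alone, with no model call. The names `JournalEntry`, `build_journal`, and `jump_target` are illustrative, not part of any existing product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JournalEntry:
    number: int         # 1-based prompt number shown in the drawer
    preview: str        # one-line, truncated preview of the prompt
    message_index: int  # position in the thread to scroll to on tap


def build_journal(messages, preview_chars=60):
    """Index the user prompts in a thread; assistant turns are skipped."""
    entries = []
    for index, message in enumerate(messages):
        if message["role"] != "user":
            continue
        # Collapse newlines and repeated spaces so the preview fits one row.
        text = " ".join(message["text"].split())
        if len(text) > preview_chars:
            text = text[: preview_chars - 3].rstrip() + "..."
        entries.append(JournalEntry(len(entries) + 1, text, index))
    return entries


def jump_target(entries, number):
    """Return the thread index for a tapped prompt number."""
    if not 1 <= number <= len(entries):
        raise IndexError(f"no prompt numbered {number}")
    return entries[number - 1].message_index
```

The interface would hand the returned index to whatever scroll mechanism it already has. The journal is rebuilt from the thread itself, so nothing new needs to be stored.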

The Language

This respects what already exists.

For conversational systems, the pattern emerges through the composer itself.

ChatGPT, Gemini, Claude, and Grok can surface it through the input bar or plus menu,

where additive actions already live.

For agentic and coding systems, the same principle holds.

Claude Code, Codex, and Cursor

can expose it through command palettes, prompt drawers,

or editor-adjacent panels native to their environment.

Different surfaces.

One retrieval primitive.

One interaction pattern.

Infinite expressions.

The model changes nowhere.

The interface changes once.

The Pair

The Reading Layer addressed how intelligence is displayed.

The Prompt Journal addresses how intelligence is retrieved.

Display and retrieval.

Comprehension and return.

Two halves of the same problem.

Individually useful.

Mutually amplifying.

A reading layer.

A memory layer.

Structural primitives for long-context systems.

Not features.

Foundations.

The Outcome

When users can return, conversations become accumulative.

Less repetition.

Less context rebuilding.

Less forgetting through scrolling.

Dialogue stops behaving like transient output.

It begins behaving like working memory.

Chat becomes less disposable.

Closer to study.

Closer to collaboration.

The system remembers.

Now the user can too.

The Position

We scaled context.

We did not scale navigation.

The gap is not in the model.

It is in the interface.

The cost to fix it is small.

The cost of ignoring it is not.

The model is not the bottleneck.

It never was.